By: Alexander Coplan

Big Brother is always watching, but what if he mistakes you for someone else? The proliferation of facial recognition software throughout the country has provided law enforcement with a valuable tool. This software may help locate missing persons or identify the deceased. It can also positively identify people in criminal investigations. However, left largely unregulated, law enforcement’s use of this software has generated significant concern about its potentially devastating effect on American social life and privacy. Soon, these concerns will have to be addressed.

Background

Facial recognition technologies affect people of color disproportionately. For example, African Americans are more likely to be stopped by law enforcement and subjected to facial recognition searches than individuals of other ethnicities. This creates major equity issues when facial recognition software struggles to accurately identify people of color.

In a 2018 study, researchers from MIT and Microsoft analyzed the accuracy of facial recognition programs from three prominent tech companies: Microsoft, IBM, and Face++. The study found an alarming number of false positives for women and individuals with darker complexions, with error rates of up to 34.7% for those who fall within both categories.

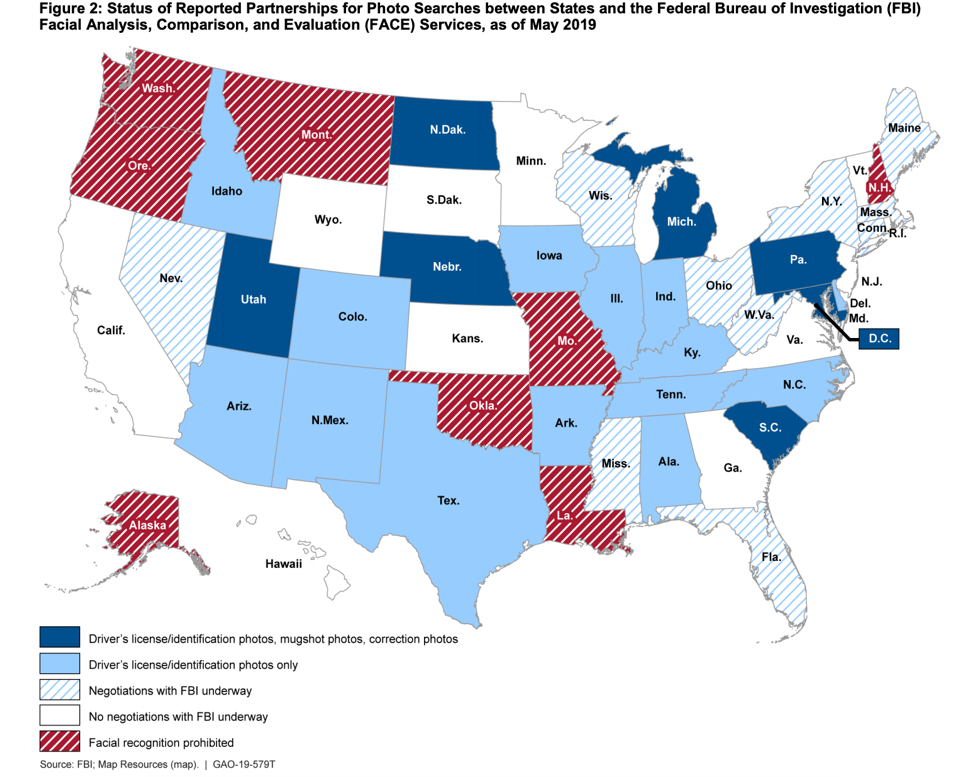

On the federal level, the FBI maintains an internal unit called Facial Analysis, Comparison and Evaluation (FACE) that uses facial recognition software. In developing its photo database, the FBI receives photos of American citizens provided by state agencies. For example, both the FBI and ICE use state Department of Motor Vehicles records to access millions of Americans’ photos without their consent. As of last year, 21 states cooperated with federal law enforcement agencies, allowing them to scan driver’s license photos. The figure below shows cooperation by state.

Federal agencies are invading our privacy by receiving Americans’ information without their consent. Meanwhile, even leading tech companies are struggling to develop unbiased algorithms for correctly matching suspects. These are just a few examples of the issues surrounding facial recognition programs.

Facial Recognition in Practice

Few courts have ruled on law enforcement’s use of facial recognition software, and this lack of guidance may have massive impacts on civil liberties. These issues were evident in Lynch v. Florida, a 2018 case in which Willie Allen Lynch was accused of selling $50 worth of crack cocaine and was subsequently convicted and sentenced to eight years in prison. In this case, Florida law enforcement took photos of a man selling drugs but were unable to identify him. To help identify the suspect, authorities turned to the FACE system, the same database used by the FBI, and later alleged it was Lynch in the photos.

Florida law enforcement agencies have a robust facial recognition system and even helped advise the FBI when it constructed the Bureau’s own programs. Despite their wealth of data, however, Florida’s reliance upon facial recognition software has raised issues. For example, in Lynch’s case, the software analyst admitted she did not know how the program worked or how it measured a positive identification. Nevertheless, the analyst forwarded Lynch’s photo, along with four others FACE produced as possible matches, to law enforcement. The State used this information, Lynch’s criminal history, and eyewitness testimony to convict him of drug distribution.

Furthermore, law enforcement was not required to disclose the fact that facial recognition software had been used in this case, and Lynch was not allowed to present the evidence at trial. In his defense, Lynch claimed he had been misidentified and asserted that the State’s failure to disclose the photos of other suspects constituted a Brady violation. In a motion for rehearing, Lynch’s public defender wrote, “[i]f any of the photographs of the other potential matches from the facial recognition program resembles the drug seller or Appellant then clearly there was a Brady/discovery violation and Appellant should be granted a new trial.” Following the Supreme Court decision in Brady v. Maryland, prosecutors are required to turn over all exculpatory evidence to the defense. Failure to do so constitutes a “Brady violation.”

Despite Lynch’s claim that this type of violation occurred, the court found otherwise, stating that the defense could not show that the other photos the database returned resembled him. The court also cited the defense’s reluctance to call the analyst who evaluated the photos, because Lynch’s attorney stated on the record that the analyst’s testimony would only corroborate the officers’ testimony. As a result, Lynch’s motion was denied.

Without congressional or state guidance, law enforcement agencies are left to decide for themselves how and when to use facial recognition software. As in Lynch’s case, this lack of guidance can have a major impact on an individual’s ability to defend themselves.

Legislative Approach

Congress’ failure to implement national standards for government use of facial recognition software allows for uneven application. Fortunately, Washington’s state government addressed some of the issues surrounding this software by passing Senate Bill 6280, which regulates the government’s use of this kind of technology. Going into effect in July 2021, the bill requires Washington’s law enforcement agencies to report on their use of facial recognition technology and routinely test the software for fairness and accuracy. Additionally, addressing an issue in Lynch, the bill requires the state to disclose to a criminal defendant, prior to trial, that facial recognition software was used in the case.

Further, companies like Amazon have agreed to suspend selling facial recognition software for a year in hopes that Congress will provide federal regulation. Others have followed suit, with Microsoft refusing to sell its proprietary facial recognition program to police and IBM cancelling its program entirely.

Conclusion

Facial recognition software is widely used throughout the country, and one in two American adults is already in a law enforcement facial recognition network. States like Washington have taken appropriate steps to ensure the privacy of their citizens is secure, but the need for national standards is apparent. The time for congressional regulation is now. Without government action, every American’s equity, privacy, and liberty are at stake.