By: Claire Kenneally

What’s Happening?

Note: This post contains photos of weapons and discusses violence that may be triggering.

The shootings of Renee Good and Alex Pretti shook the nation as viewers across the country tuned in to the violence in Minneapolis, Minnesota. Both Good and Pretti were protestors killed by U.S. Immigration and Customs Enforcement (ICE) agents deployed in Operation Metro Surge, a large-scale immigration raid that resulted in the detention of over 4,000 documented and undocumented Minnesotans.

But instead of prompting collective grief, the country’s varied reactions only deepened the sense that Americans now inhabit entirely different moral and political universes. In part, this stems from the media coverage surrounding the shootings: AI-generated media is accelerating political polarization by reshaping public perception of violent events before the facts can stabilize.

Where Does Our News Get Its News?

News networks have always had biases. But the internet and social media have shifted networks’ priorities from well-researched and fact-based journalism to punchy pieces aimed at generating clicks. A study by UCLA Professor Arash Amini described the media’s perpetuation of misinformation as an “arms race in which mainstream media outlets struggle to stand out amid a flood of content.”

When Renee Good was shot by ICE on January 7th, 2026, online sleuths were quick to share, and even fabricate, visuals of the moments leading up to the shooting. One X user, a self-proclaimed “Trump Loyalist Parody Account,” shared an aerial-view photo of Good’s car that quickly gained traction online. The original post did not disclose that the photo was AI-generated, nor that it was created only to illustrate why the user personally believed agents should be allowed to use lethal force. A later post admitted the photo was AI-generated after Snopes and Lead Stories debunked it (in part because the fabricated photo showed car doors open that were actually closed, and bystanders in different outfits than they wore in verified video footage). But the original photo, without the later post’s clarification, had already spread across the internet.

Image taken from Twitter user @ScummyMummy511/Max Nesterak/Snopes Illustration

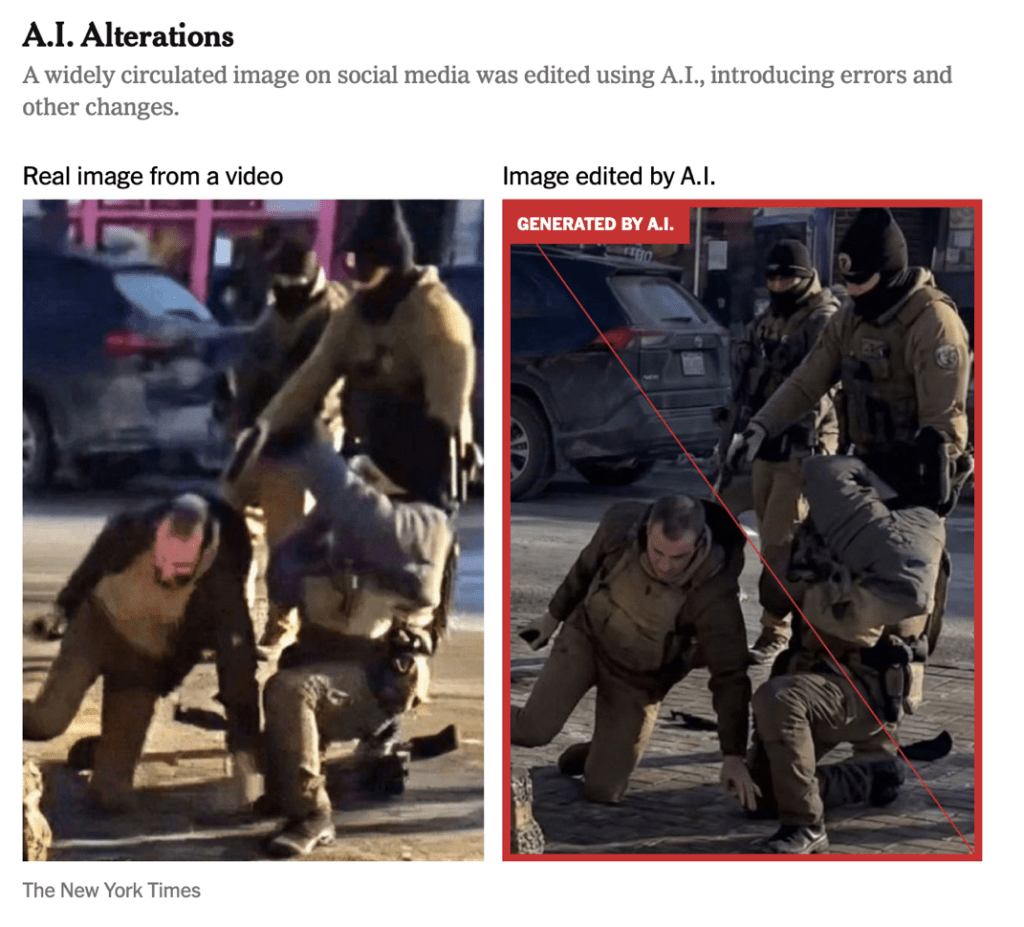

In the wake of Alex Pretti’s death a few weeks later, social media users flooded the internet with AI-generated images of him holding a gun, even though video footage of the shooting later showed him holding his cell phone. Other doctored footage falsely showed an agent holding a gun to the back of Pretti’s head while he lay prone on the ground.

Photo taken from the January 25th, 2026 New York Times article “False Posts and Altered Images Distort Views of Minnesota Shooting”

Ironically, the AI enhancement of media to justify a single political narrative is not a partisan issue. Viewers across the political spectrum suffer when the news they consume is riddled with inaccuracies, even if those inaccuracies confirm their biases. This, in turn, only widens the gap between Americans: how can you discuss an important social issue when each of you is convinced you have the concrete evidence, and that it supports your opinion?

Far-Reaching Implications

In the cases of Good, Pretti, and countless other victims of violence, another troubling aspect in the coverage of their deaths is how their images are reshaped posthumously to fit familiar narratives and biases.

Altered photos of Good circulated in the wake of her death, purporting to show that she had posted in celebration of Charlie Kirk’s shooting in September 2025. This led some conservative-leaning viewers to call her death “karma,” stoking animosity against her even after fact-checkers showed the photos were fake.

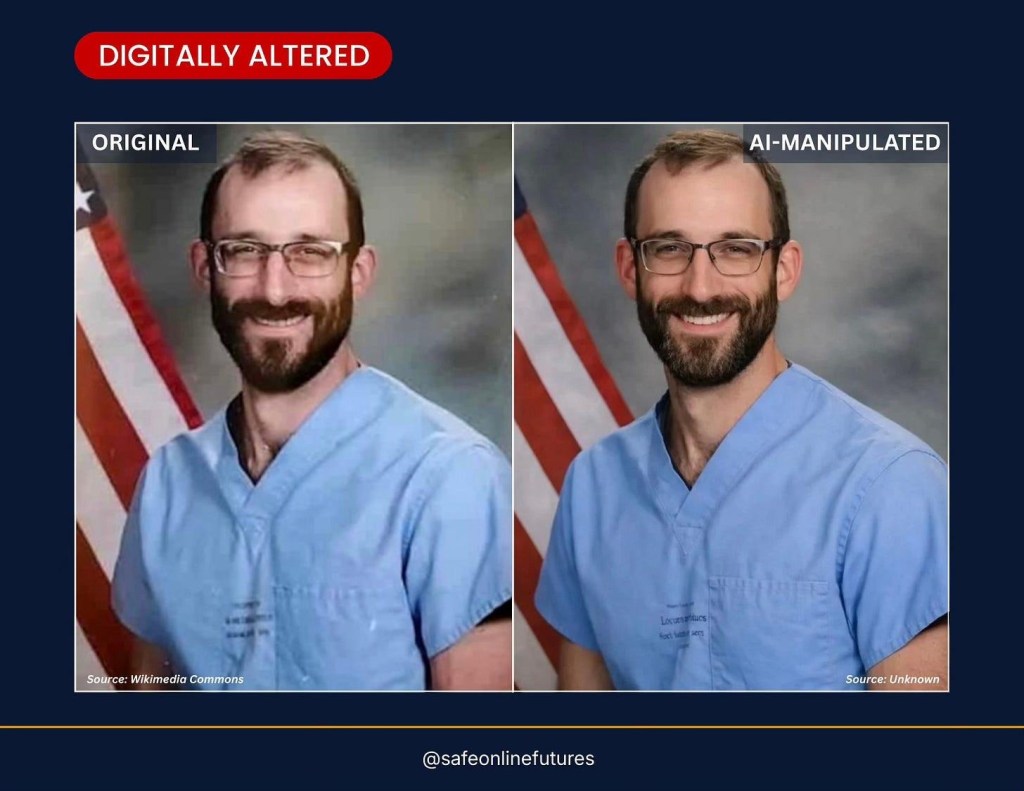

In Pretti’s case, multiple news outlets accidentally used an AI-doctored photo of him in their initial coverage. Some speculate the edited photo was created to make him appear “less Jewish.” Others suggested it was meant to make him “. . . a more appealing ‘poster boy’ for the anti-ICE movement.” MSNBC, which used the altered photo, said it had merely pulled the image from the internet after searching Pretti’s name. Given AI’s tendency to generate images that favor whiteness and conventional beauty standards and that reproduce embedded racial biases, it is unsurprising, but disturbing, that mundane Google Image searches can further subtle whitewashing and stereotyping under the guise of algorithmic neutrality.

Photo taken from the January 28th, 2026 New York Post article “AI-altered pic of Alex Pretti is being widely circulated after his killing by Border Patrol”

The Legal Implications

Few legal protections currently exist to counteract AI-“enhanced” media. The current administration has taken a strong stance against AI regulation, issuing Executive Order 14179 on January 23, 2025 (“Removing Barriers to American Leadership in Artificial Intelligence”) and Executive Order 14365 on December 11, 2025 (“Ensuring a National Policy Framework for Artificial Intelligence”). Together, these orders aim to remove what the administration views as barriers to AI, including state-level AI regulations, to “encourage adoption of AI applications across sectors.”

The legislative branch has taken a different approach from the executive. The TAKE IT DOWN Act, signed into law in May 2025, prohibits the nonconsensual online publication of intimate visual depictions of individuals, whether authentic or computer-generated. However, the act focuses on sexual imagery and would not apply to the altered images of Good or Pretti.

The NO FAKES Act, proposed in 2025, holds more promise: it would make individuals and entities legally liable for creating unauthorized digital replicas of a person. The bill focuses on establishing intellectual property rights against AI-generated deepfakes and creating legal recourse for individuals whose likeness is used without consent. If it passes, it could give members of Good’s and Pretti’s families a way to sue for damages in civil court.

So What Am I Supposed to Do?

The 24-hour news cycle is not slowing down. With minimal legal recourse and an executive office that happily generates AI misinformation as freely as fringe conspiracy theorists, it can feel as though there is little to do but hope Congress acts quickly. One intermediate solution, however, is turning to local, community journalism instead of national pundits. For coverage of immigration crackdowns, protests, and updates on Minneapolis, consider:

- Georgia Fort (Journalist)

- Unicorn Riot’s “ICE In Minnesota” Project

- Sahan Journal (a nonprofit newsroom “dedicated to reporting for immigrants and communities of color in Minnesota.”)

In the emerging age of AI narratives and de-regulation, staying truly informed now means choosing intention over speed, resisting easy narratives, and doing the critical thinking ourselves.

This article acknowledges and honors all individuals who have been murdered by ICE and the Department of Homeland Security in 2026: Keith Porter, Parady La, Heber Sanchaz Domínguez, Victor Manuel Diaz, Luis Beltran Yanez-Cruz, Luis Gustavo Nunez Caceres, Geraldo Lunas Campos, Renee Good, and Alex Pretti.

#Deepfakes #A.I.Misinformation #WJLTA